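AI can write code, but it takes skill to keep it secure.

Our enterprise secure coding platform gives you the ability to secure both human-written and AI-generated code without slowing down deployment.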

Traditional security training focuses on … not capability. Static scanning detects problems after they have occurred. To reduce software risk, secure coding behavior has to improve. Secure coding capability is the foundation of effective AI software governance.

Build developer capability for secure AI development

Secure Code Warrior Learning provides AI security training that builds the skills behind every commit. Developers learn to secure AI-generated code through hands-on practice across real-world AI workflows, reducing risk at the source.

Build secure coding capability at scale

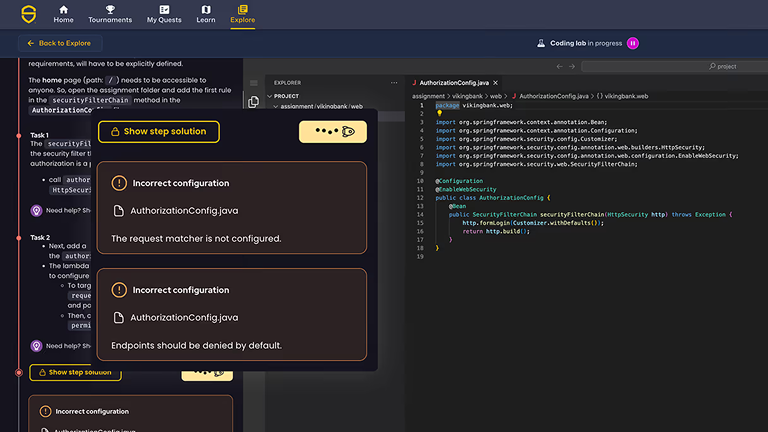

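Hands-on secure coding labs

Developers fix real security vulnerabilities through interactive exercises, with support for more than 75 languages and frameworks.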

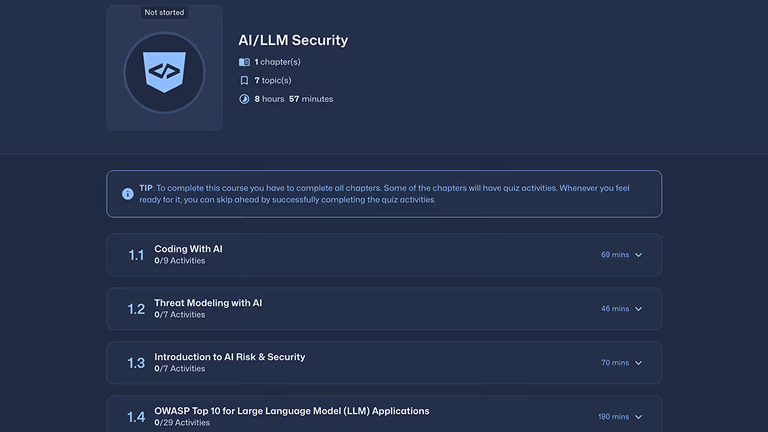

AI-specific security modules

Validate and secure AI-generated code, identify insecure patterns, and apply security standards in AI-assisted workflows.

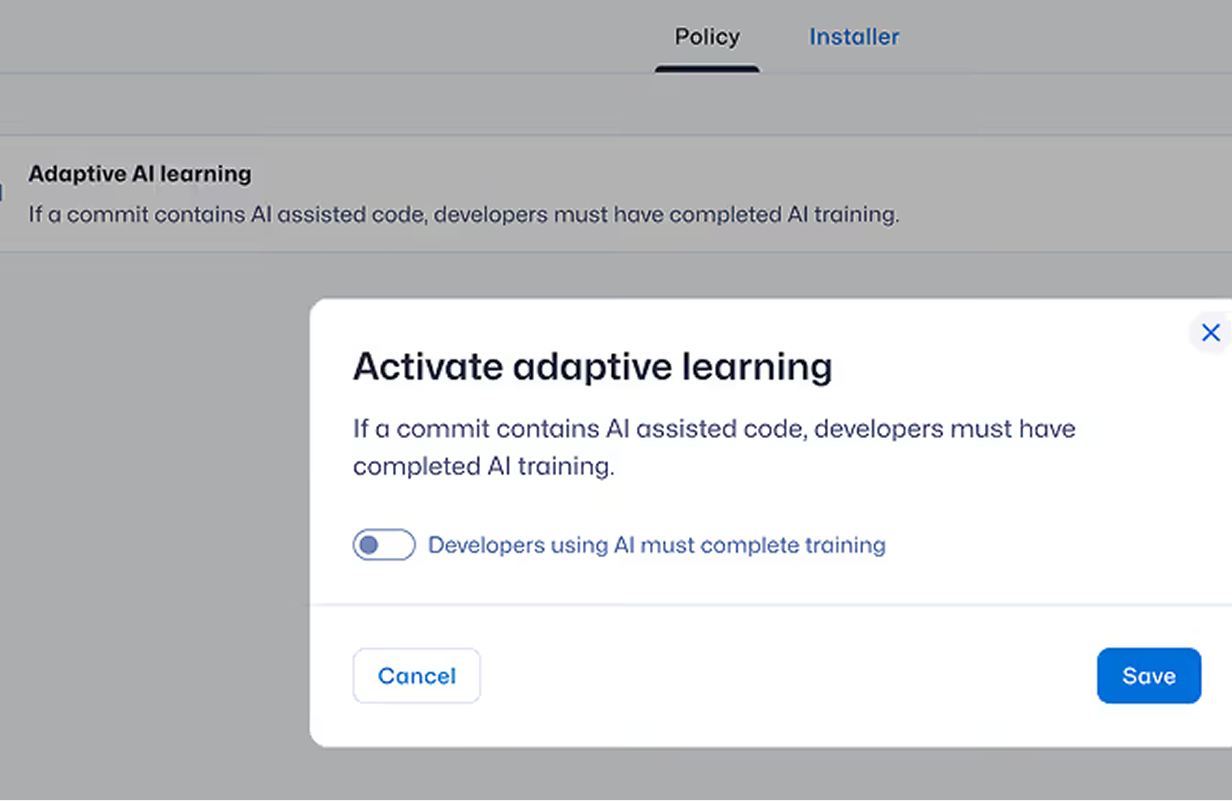

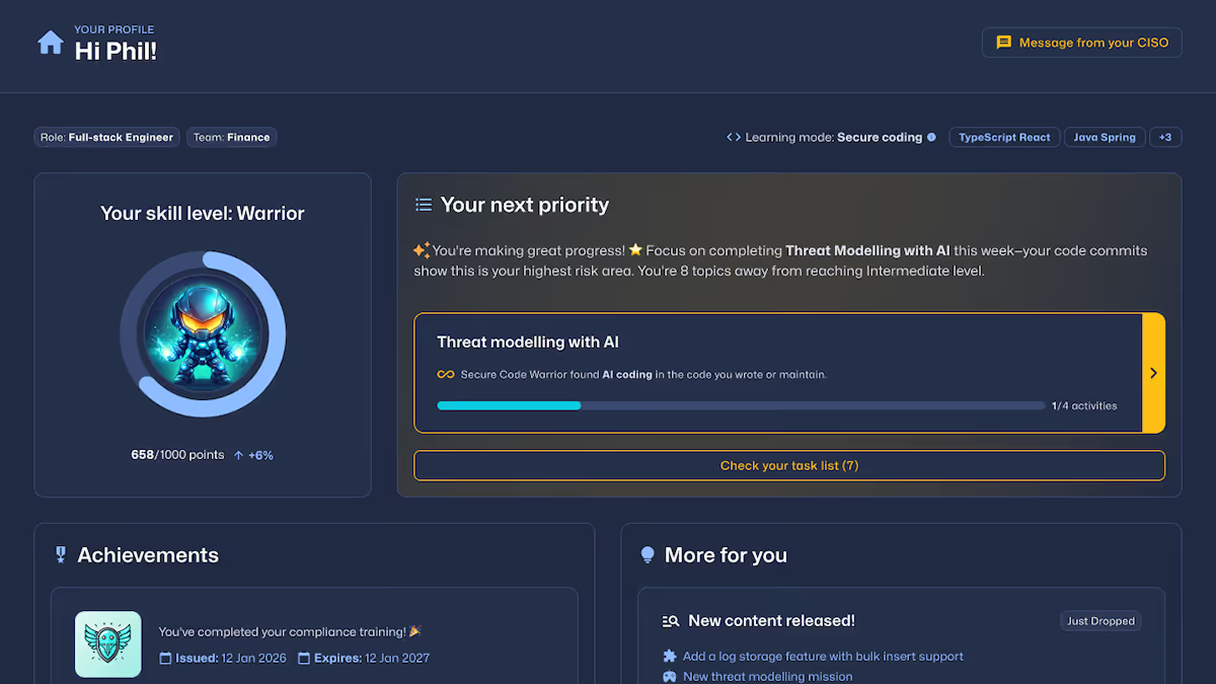

Adaptive learning paths

Automatically assign targeted training based on developer actions, risk signals, or benchmark gaps.

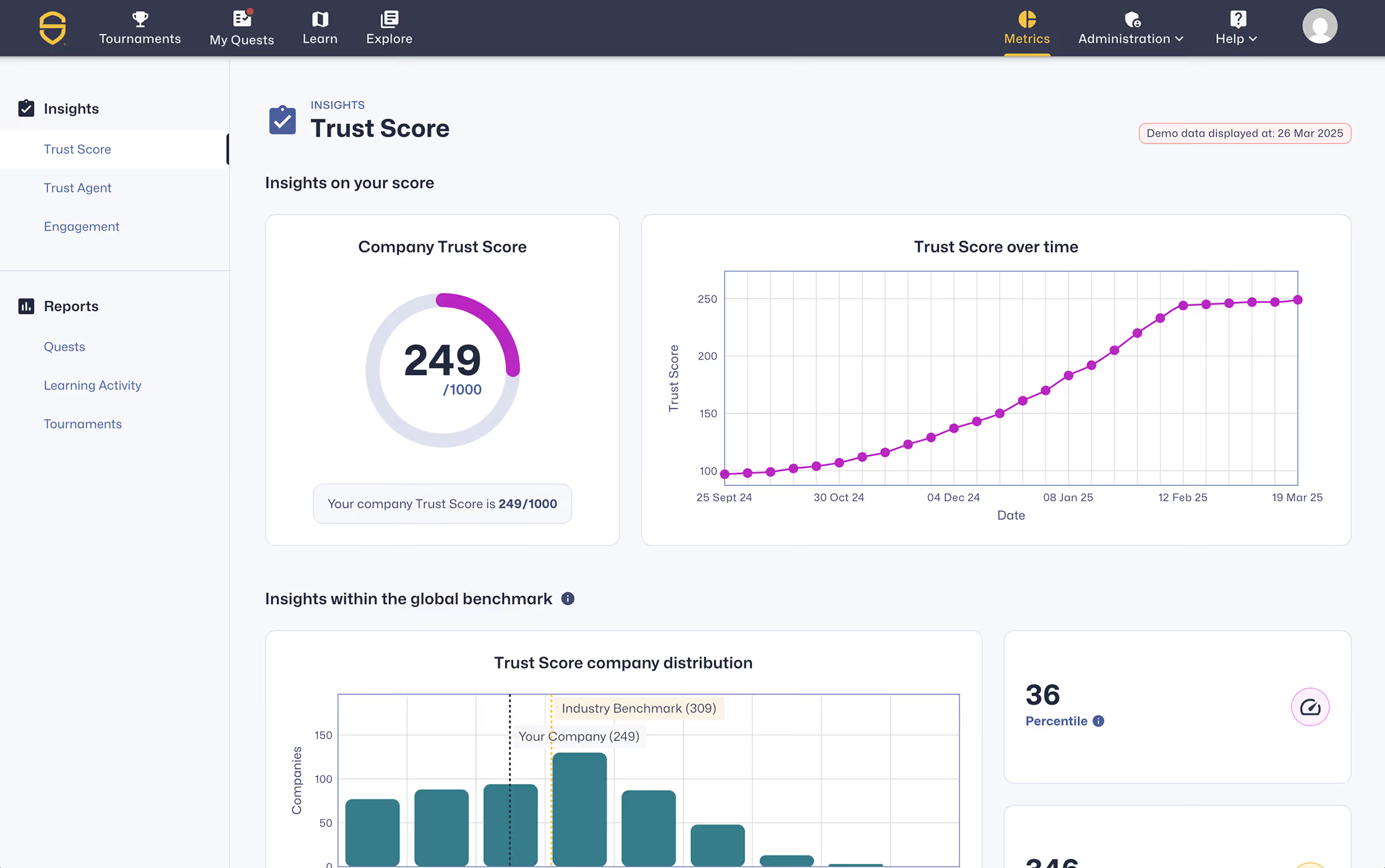

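Measure progress

Use SCW Trust Score® to assess developer capability, benchmark it against peers, and track measurable progress in secure coding.

Achieve compliance

Tailor training to the OWASP Top 10, NIST, PCI DSS, CRA, and NIS2, with audit-ready reporting.

The control layer for AI-assisted development

Make AI-driven development visible, secure, and resilient, eliminating vulnerabilities before production so that teams can move quickly and with confidence.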

Missions

Coding Labs

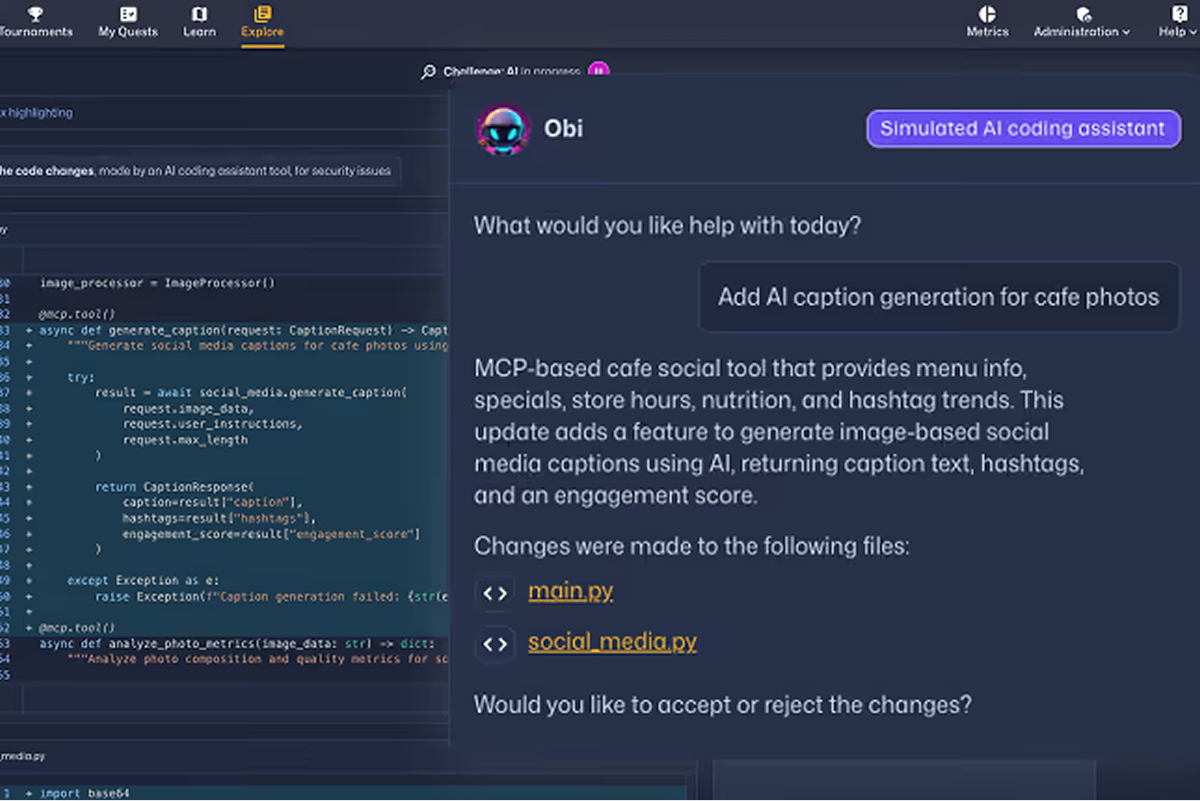

AI Challenges

Check out the SCW Learning Content Guide which outlines the breadth and depth of training available across the Secure Code Warrior platform, including secure coding vulnerabilities, AI security topics, programming languages, frameworks, and role-based learning paths.

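Reduce vulnerabilities at the source

Secure Code Warrior reduces recurring vulnerabilities, reinforces secure coding habits, and delivers measurable improvement for developers. These outcomes show that enterprise secure coding training at scale has a measurable, practical effect in modern development environments.

Time to remediation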

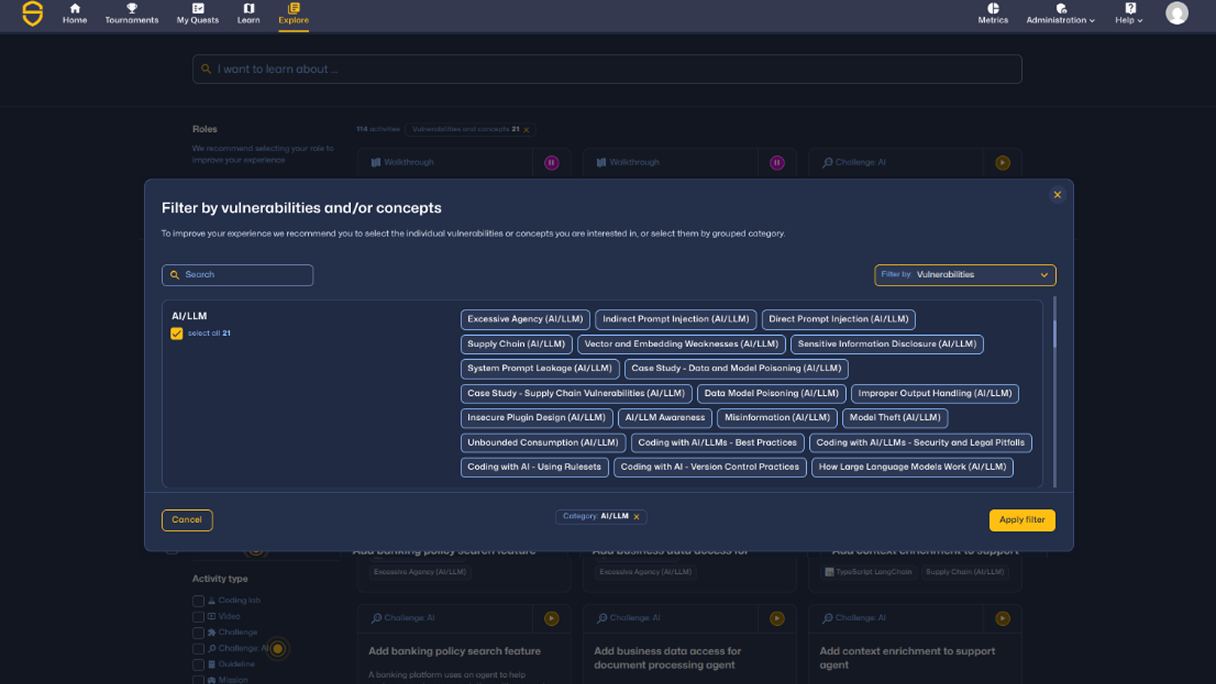

What developers learn in AI security training

Coverage spans LLM vulnerabilities, agent protocols, infrastructure security, and foundational AI security design — mapped to real developer workflows.

Practice real-world AI and LLM security risks.

AI security training teaches developers how to identify, prevent, and remediate vulnerabilities in AI-generated code and modern AI systems, including:

Build foundational AI security knowledge

Developers learn how to securely design and review AI systems through:

Secure AI agents, protocols, and cloud AI environments

Understand and mitigate risks across agent-based systems and AI infrastructure, including MCP and cloud AI services:

Secure AI services and model integrations

Model Context Protocol — Secure AI agents and protocol interactions

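Built for AI governance teams

Demonstrate measurable developer capability and reduce software risk across human and AI-driven development.

Secure code starts with secure developers

Strengthen your secure coding skills, reduce the vulnerabilities you introduce, and build measurable developer trust across the enterprise.

Reduce vulnerabilities through hands-on secure coding practice

Learn how Secure Code Warrior reduces vulnerabilities and delivers measurable risk reduction.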