Commit-level governance for AI software development

Trust Agent operationalizes AI software governance at the point of commit — correlating AI model usage, developer risk signals, and secure coding policies to prevent introduced vulnerabilities before code reaches production.

AI is writing code. Your security controls have not kept up.

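AI-assisted development is now embedded in every stage of modern software delivery:

- AI coding assistants generating production-ready code

- Agent-based workflows extending beyond the developer's desktop

- Cloud-hosted coding bots working across multiple repositories

- Rapid, multi-language commits at unprecedented velocity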

Traditional scanning detects vulnerabilities after code is merged. Training strengthens developer capability. Neither provides visibility into how code is generated or evaluated before commit.

Trust Agent closes the gap — correlating AI usage, risk signals, and secure coding capability to reduce software risk at the point of commit.

The enforcement engine for AI software governance

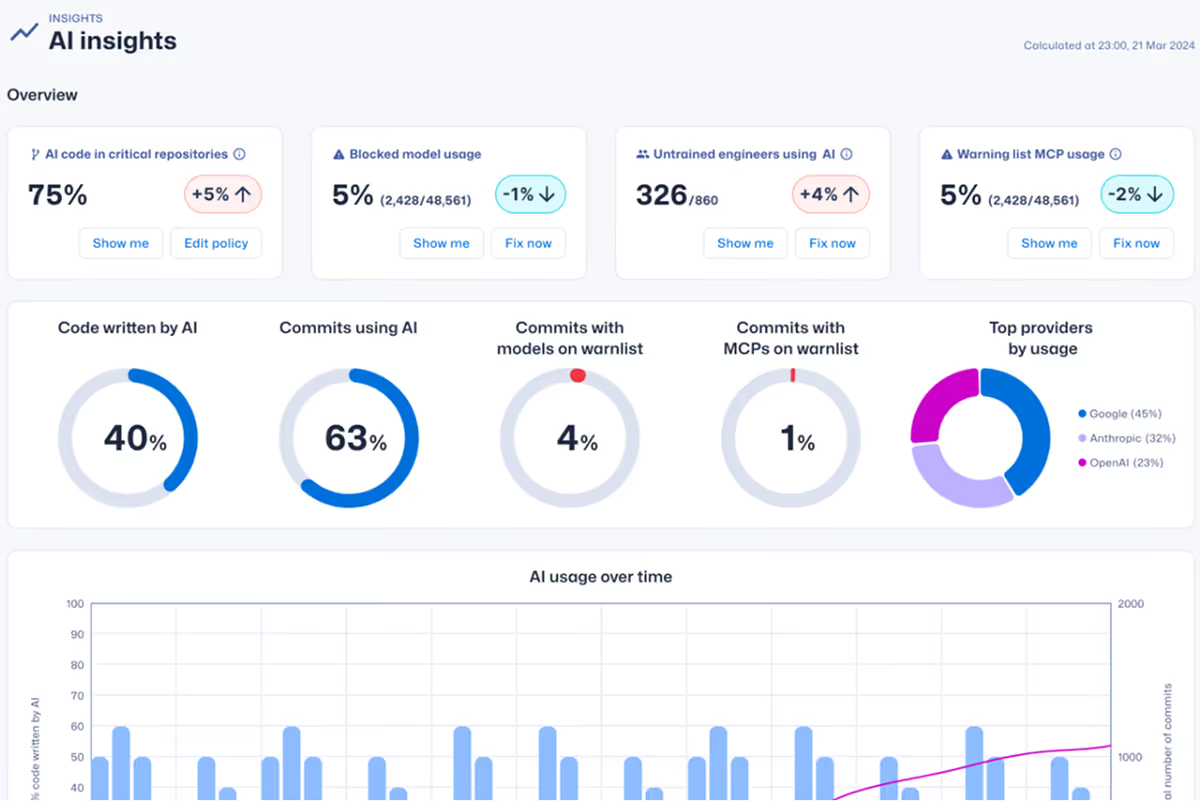

Trust Agent turns visibility into actionable insight. It correlates commit metadata, AI model usage, MCP activity, and governance thresholds to highlight risk at commit — without slowing development velocity.

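Prevent risk. Verify controls. Ship faster.

Trust Agent reduces the risk of AI-introduced vulnerabilities, shortens remediation cycles, prioritizes high-risk commits, and reinforces developer accountability in AI-assisted development.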


Traceability at commit

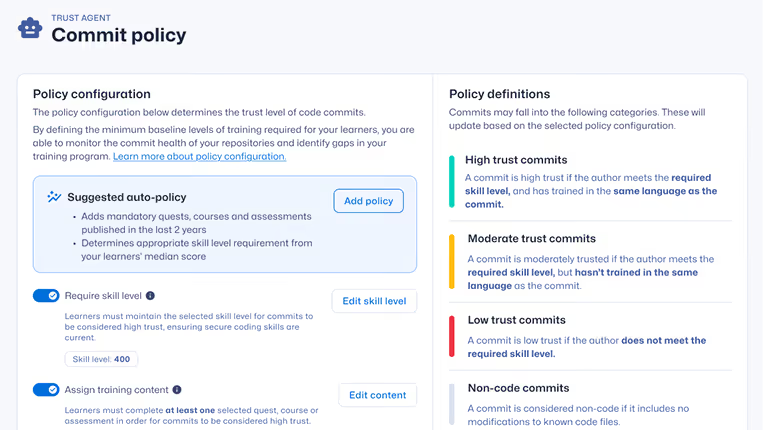

Operationalize governance at commit

Traditional application security tools detect vulnerabilities after code is written. Trust Agent provides visibility into AI-assisted code at commit — correlating AI usage, developer risk signals, and secure coding capability to identify elevated risk before code reaches production.

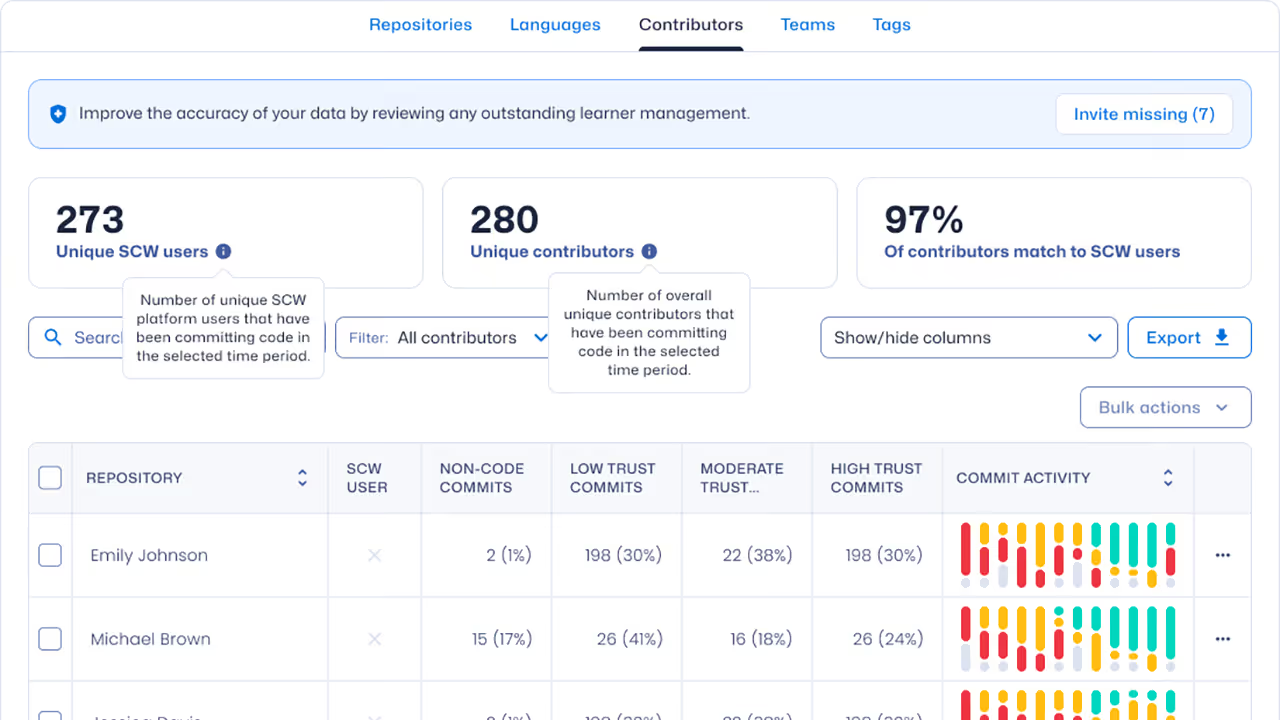

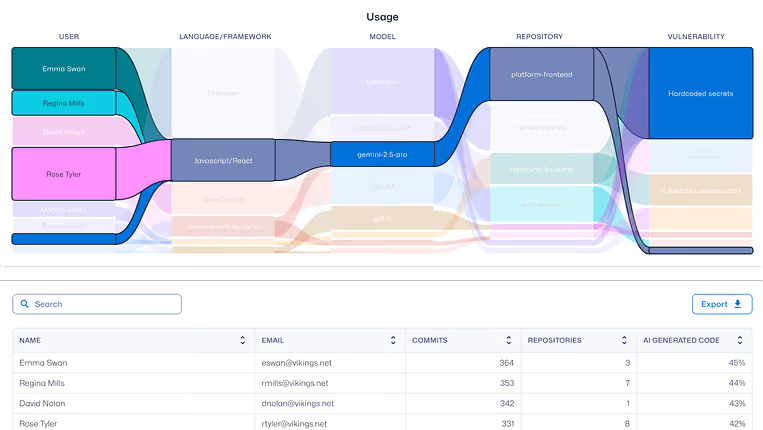

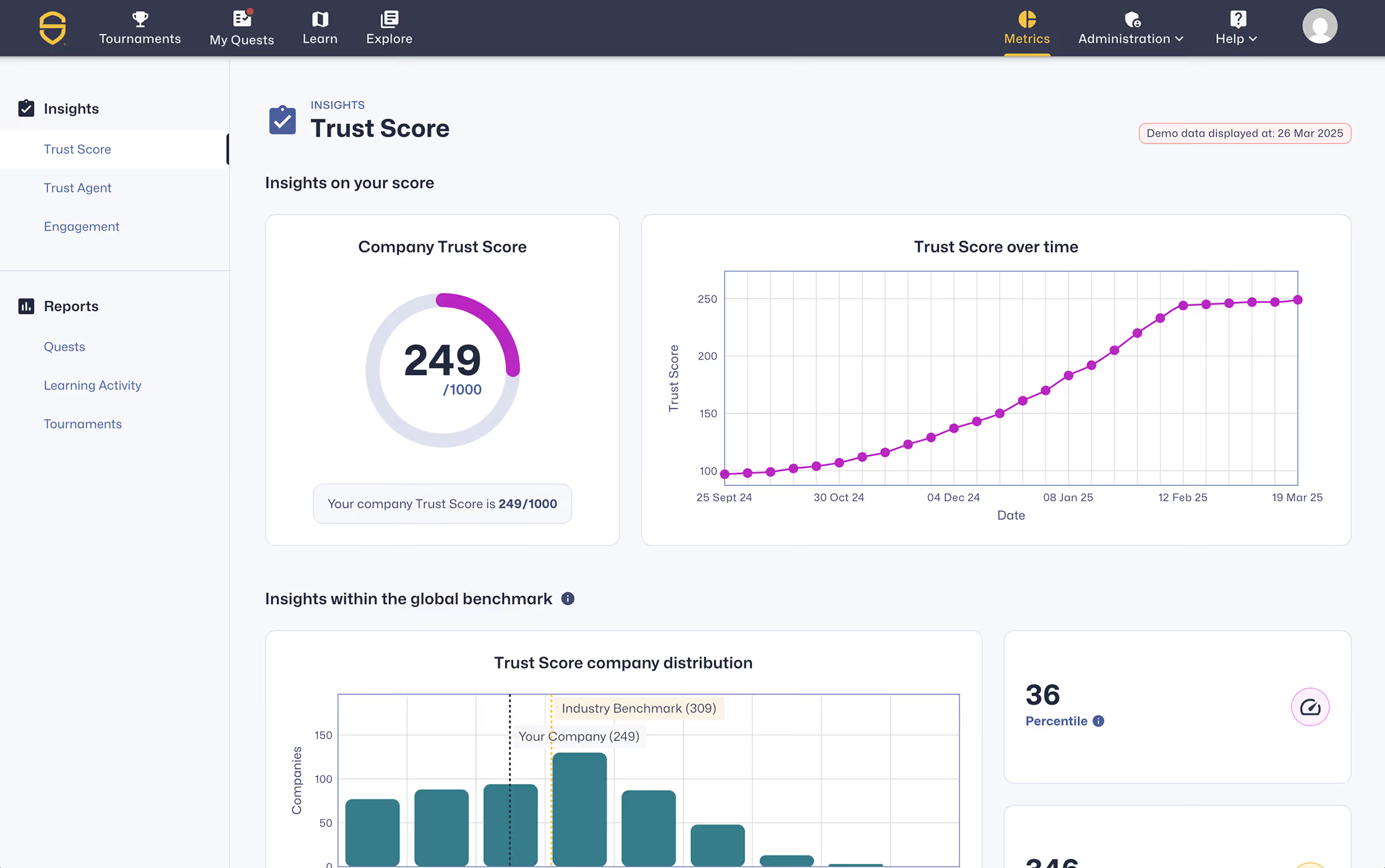

Developer discovery and intelligence

Continuously identify contributors, tool usage, commit activity, and verified secure coding capability.

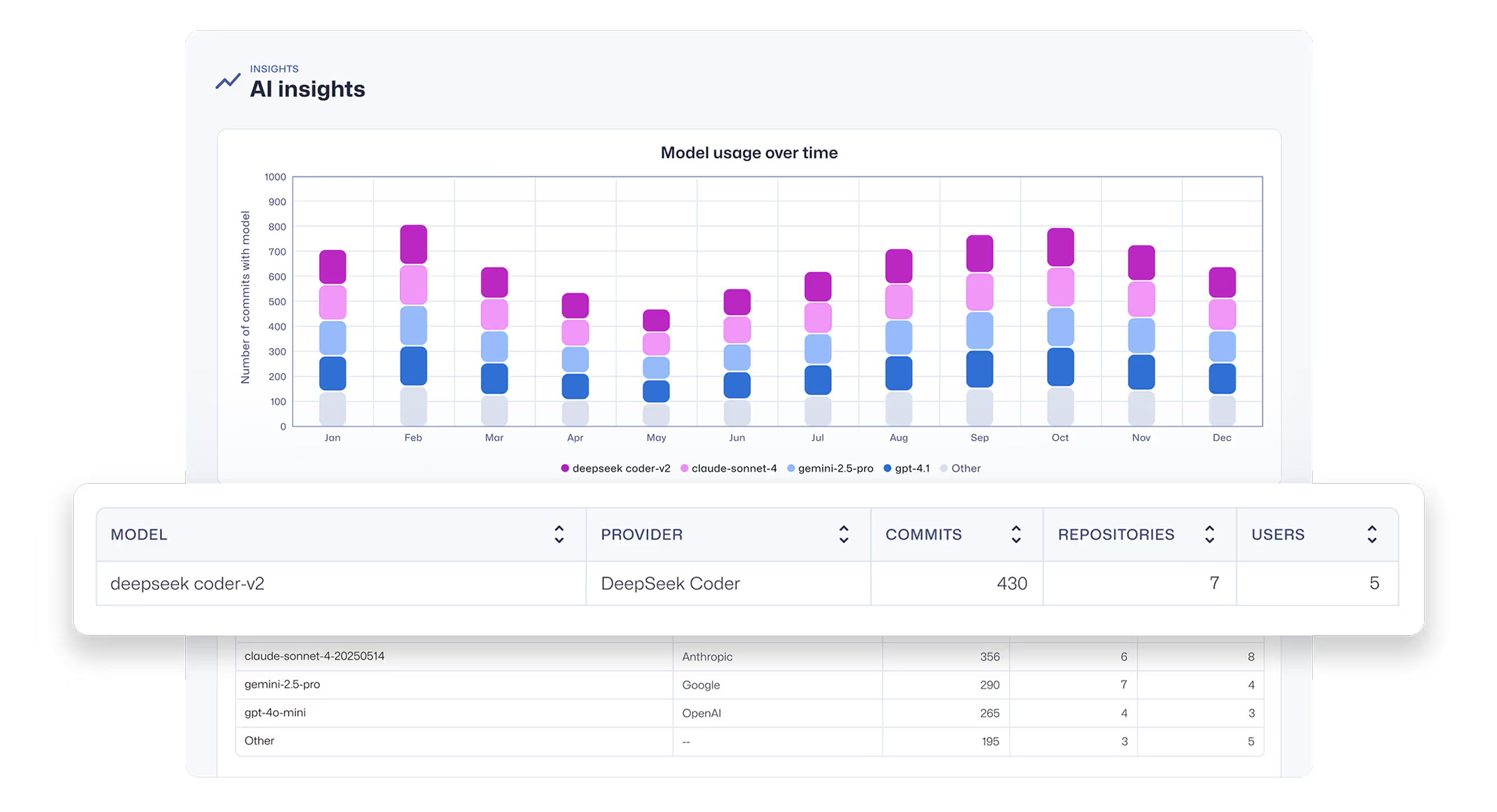

AI tool and model traceability

Maintain commit-level visibility into which AI tools, models, and agents contribute to each repository.

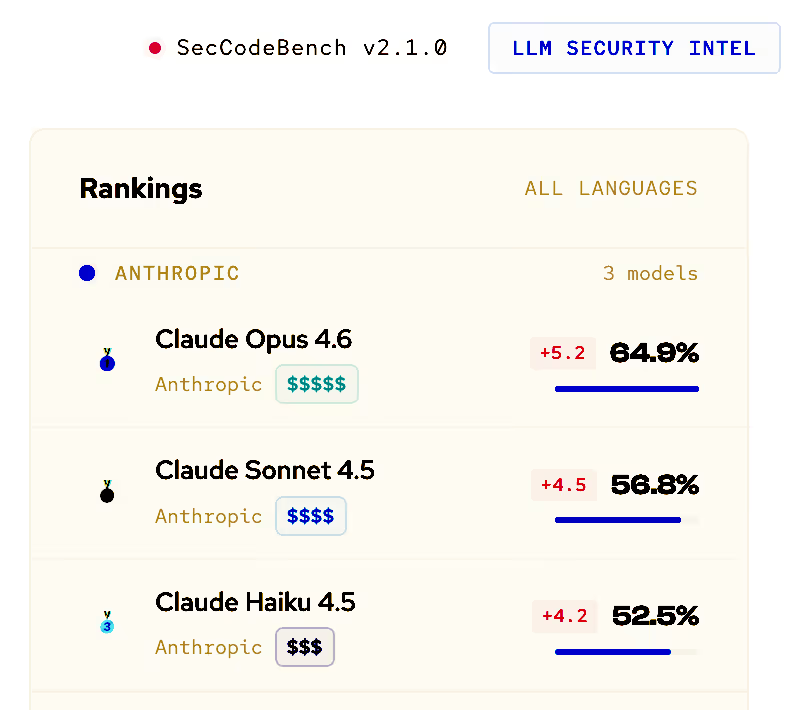

LLM security benchmarking

Apply Secure Code Warrior security benchmark data to inform which AI models are approved and how they are used.

Commit-level risk scoring

Correlate AI model usage with developer risk signals and secure coding capability to highlight high-risk code contributions.

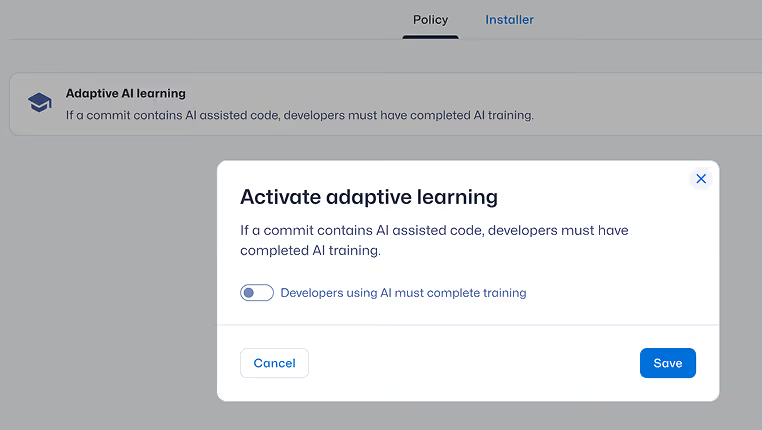
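To make the idea of correlating signals concrete, here is a minimal sketch of how a commit-level score could be assembled. It is not Trust Agent's scoring method: the `CommitSignals` fields, the weights, and the 0.6 threshold are all hypothetical values chosen for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CommitSignals:
    """Hypothetical per-commit inputs; these names are assumptions, not a product API."""
    ai_assisted: bool           # was an AI tool or model involved in this commit?
    model_approved: bool        # is that model on the organization's approved list?
    developer_risk: float       # assumed signal, 0.0 (low) to 1.0 (high)
    secure_coding_skill: float  # assumed capability, 0.0 (none) to 1.0 (verified strong)


def commit_risk_score(s: CommitSignals) -> float:
    """Combine the three signal families into a 0..1 score with illustrative weights."""
    ai_risk = 0.0
    if s.ai_assisted:
        # An unapproved model is treated as riskier than an approved one.
        ai_risk = 0.5 if s.model_approved else 1.0
    skill_gap = 1.0 - s.secure_coding_skill
    score = 0.4 * ai_risk + 0.3 * s.developer_risk + 0.3 * skill_gap
    # Clamp so out-of-range inputs cannot push the score outside 0..1.
    return round(min(max(score, 0.0), 1.0), 2)


def is_high_risk(s: CommitSignals, threshold: float = 0.6) -> bool:
    """Flag a commit for review when its score meets the assumed threshold."""
    return commit_risk_score(s) >= threshold
```

In this sketch, a commit written with an unapproved model by a developer with weak verified skills scores well above the threshold, while a hand-written commit from a highly skilled developer stays far below it. A real deployment would derive weights and thresholds from its own governance policy.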

Adaptive risk remediation

Trigger targeted learning from real commit behavior to close skill gaps and prevent recurring risk.

Purpose-built for AI software governance

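Govern AI-driven development before it ships

Trace AI influence. Correlate risk at commit. Enforce control across the software lifecycle.

Commit-level governance for AI-assisted development

See how Trust Agent delivers commit-level visibility, developer trust scoring, and enforceable AI governance controls.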